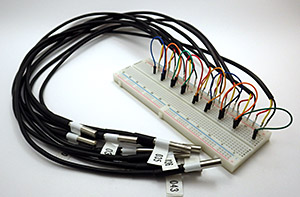

Give each sensor a serial number, but don’t label the sensor itself as I have in this photo or the labels will just fall off during the steam point testing. After adding crimp pins to the wire ends it becomes easy to gang them together on a breadboard for testing. Despite Maxim’s warnings, I had star configurations above 20 sensors reading well with them close together like this.

I’m probably not the first person to note that sensor calibration is one of the big differences between the mountains of data coming from the citizen science movement and that produced by research professionals. (…mea culpa…) After opening this can of worms, I think I am beginning to understand why: Accuracy calibration rapidly gets complicated, or expensive, and often it’s both at the same time. By the time you have what you need to do the job, the difference between a $0.30 sensor, and a $30 sensor, is pretty insignificant. So it’s no surprise that few people work on calibration methods for low cost sensors, or why normalization approaches are used instead.

But I am already spending far too much on this little hobby, so despite knowing that the folks over at Leighton Telescope managed to get their DS18b20’s to about ±0.01°C with a NIST traceable thermometer, I thought I would see how far I could get on my own. I suppose if I was an alpha geek, I would make my own platinum RTD and calibrate the sensors against that. But I’m not quite there yet. I should also point out that numbers are not my strong suit, so there could be some significant errors in what I have cobbled together here and I appreciate any feedback to help correct those…

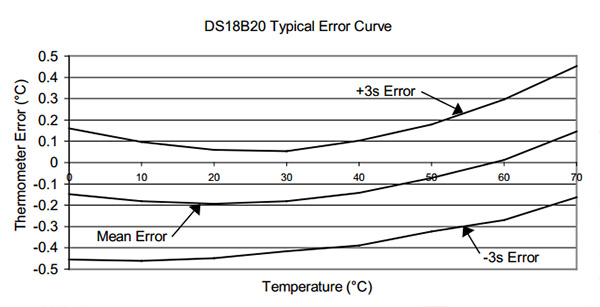

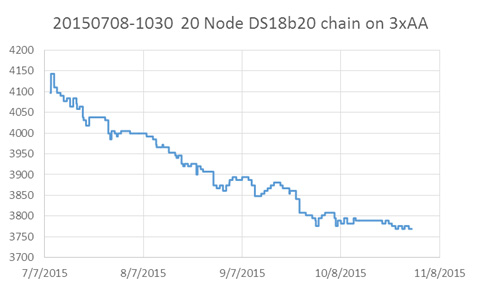

The first thing that occurs to me is: Can you read the temperature more accurately by averaging a bunch of these sensors together? If the errors in the sensor readings are random, with a mean and standard deviation, then the uncertainty of the average should shrink as the number of sensors increases…right? The data sheet gives you a sense of how far you can get with that approach, because I assume that Maxim/Dallas used a very large number of sensors to derive their typical performance curve:

But if I understand what people say about this graph, the only reason the 3 sigma spread on that graph looks better than ±0.5°C at 20°C is because the errors in the sensors used to derive that curve were truly random, and had a nice Gaussian distribution around that mean. However, since the actual batch of sensors I am holding in my hand is likely from the same production run, it is subject to systematic errors that don’t cancel each other out so nicely. And since I bought them on eBay, there is also a chance that they might be fake DS18b20’s. So I have no idea how my mean error line is related to the one on Maxim’s graph.
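To make the random-versus-systematic distinction concrete, here is a minimal simulation sketch. The function names, the 0.2°C noise figure and the 0.3°C shared bias are all made-up illustration values, not measurements from my sensors. It shows that averaging shrinks the random part of the error roughly as 1/√N, while an offset shared by the whole batch passes straight through to the average.

```python
import random
import statistics

def standard_error(noise_sd, n_sensors):
    """Expected spread of an N-sensor average when the sensor errors
    are independent and random (no shared offset)."""
    return noise_sd / n_sensors ** 0.5

def simulate_mean_error(n_sensors, shared_bias, noise_sd, true_temp=20.0, seed=1):
    """Error of the N-sensor average relative to the true temperature.

    shared_bias is a hypothetical systematic offset common to the whole
    batch; noise_sd is the sensor-to-sensor random scatter.
    """
    rng = random.Random(seed)
    readings = [true_temp + shared_bias + rng.gauss(0.0, noise_sd)
                for _ in range(n_sensors)]
    return statistics.mean(readings) - true_temp

if __name__ == "__main__":
    for n in (1, 4, 16, 64):
        print(n,
              round(standard_error(0.2, n), 4),            # random part shrinks
              round(simulate_mean_error(n, 0.3, 0.2), 4))  # stays near the 0.3 bias
```

No matter how many sensors go into the average, the simulated error hovers around the shared bias, which is exactly the worry with a batch from one production run.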

But there are still useful things you can do with this kind of averaging:

The front temperature display on this clunky old Fisher Scientific was off by more than 2°C, and it was missing a foot. While it’s hardly a temperature chamber, the insulation and covering lid produced a slow cooling curve, so I could be reasonably confident the sensors were being exposed to the same temperatures. Don’t use data from the rapid heating cycle, because temperatures are likely to be unevenly distributed in the bath.

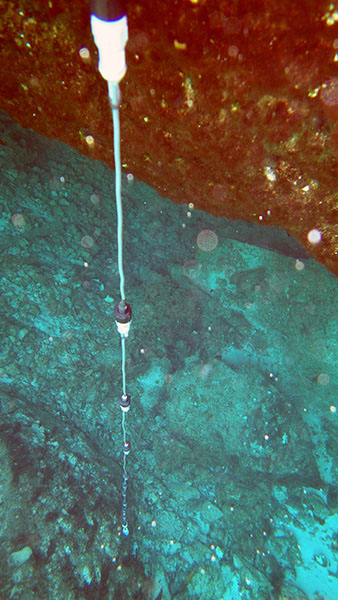

First of all you can get rid of the bad sensors by selecting a group that has a consistent behavior over the temperature range you are looking at, with readings that fall within the manufacturer’s specifications. To get enough data for this kind of assessment, I needed to run at least 10 sensors at the same time so that the average had some statistical weight. For this testing I picked up an old five Litre isotemp bath (you can find them for $25-$50 on eBay) but you could just as easily do this with hot water in a styrofoam cooler. With about 20 sensors on a breadboard in a star configuration (4.7k pullup), I brought the water bath up to a stable 40°C, and then moved the entire thing out into the fridge and left it logging during the cool down. The lid was on, and I had several towels over top to make the process go as slowly as possible. It took 12 to 24 hours for each batch of sensors to reach ~5°C.

With this data in hand, I looked at the residuals by subtracting each sensor’s raw reading from the average of all the sensor readings. This exercise sent one DS18 straight into the bin, as it was more than 2.5°C away from the rest of the herd for its entire record. Another was triaged due to a strange “hockey stick” bend in its residual around 25°C. I threw out the data from those two duds, and recalculated the average & residuals again. Just to be on the safe side I decided not to epoxy any sensors into a long chain if they were more than 0.3°C away from the average. (Although I am still wondering whether eyeballing residuals like this is enough to exclude the right outliers?)
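The residual screening described above can be sketched in a few lines. This is only an illustration under my own assumptions: the function names are hypothetical, the readings are assumed to be logged at the same moments for every sensor, and the "drop the worst sensor, then recompute the average" loop is one way to automate what I did by eye, not a formal outlier test.

```python
from statistics import mean

def residuals_from_average(readings):
    """readings: dict of sensor_id -> list of temperatures logged at the
    same times. Returns sensor_id -> list of (reading - group average)."""
    ids = list(readings)
    n_samples = len(readings[ids[0]])
    averages = [mean(readings[s][i] for s in ids) for i in range(n_samples)]
    return {s: [readings[s][i] - averages[i] for i in range(n_samples)]
            for s in ids}

def screen_sensors(readings, limit=0.3):
    """Repeatedly drop the sensor with the largest |residual| above `limit`,
    recomputing the average each time so a dud cannot drag the mean.
    Returns (sorted ids kept, ids rejected in the order they were dropped)."""
    kept = dict(readings)
    rejected = []
    while len(kept) > 2:
        res = residuals_from_average(kept)
        worst = max(res, key=lambda s: max(abs(r) for r in res[s]))
        if max(abs(r) for r in res[worst]) <= limit:
            break
        rejected.append(worst)
        del kept[worst]
    return sorted(kept), rejected

if __name__ == "__main__":
    demo = {"A": [20.0, 10.0], "B": [20.1, 10.1],
            "C": [19.9, 9.9], "D": [22.6, 12.6]}  # D is the dud
    print(screen_sensors(demo))
```

A check like this only catches constant offsets and gross misbehavior. A "hockey stick" bend that stays inside the limit still needs a look at the residual plot.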

You can then normalize the sensors to each other by fitting a quadratic equation to a graph of each sensor & the overall average line. Excel can generate these coefficients for you with the linest function, or it can solve the quadratic with Goal Seek. But the easiest method I found was to make a 2nd order (but not higher) fit with the chart tool’s trendline function. Make a scatter plot of the data with the averages on the Y axis, and data from one individual sensor on the X axis. Then right click on the data points to select them, and choose ( Add Trendline ) from the pull down menu, with the [ ] Display equation on chart tick box checked. (here is an example of the technique using an older version of Excel)

The equation you see displayed will convert that particular sensor’s output into corresponding temperatures on the average line. With this transformation, each sensor will yield the same reading if it is in the same thermal environment, and you can accept that any differences between two sensors in the chain represent real differences in temperature.
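For anyone who would rather script this than click through Excel, here is a rough equivalent of the 2nd order trendline fit. It is a sketch under assumptions: `fit_quadratic` and `normalize` are hypothetical helper names, and the fit is an ordinary least-squares solve of the normal equations, which should give the same kind of coefficients as the trendline but is not taken from Excel itself. The individual sensor goes on X and the group average on Y, as in the scatter plot.

```python
def fit_quadratic(x, y):
    """Least-squares fit of y = a*x**2 + b*x + c. Returns (a, b, c).

    x: readings from one sensor, y: group average at the same moments.
    """
    s = [sum(xi ** k for xi in x) for k in range(5)]                    # sums of x^0..x^4
    t = [sum(yi * xi ** k for xi, yi in zip(x, y)) for k in range(3)]  # sums of y*x^0..y*x^2
    # Augmented 3x3 normal equations for the unknowns (a, b, c).
    m = [[s[4], s[3], s[2], t[2]],
         [s[3], s[2], s[1], t[1]],
         [s[2], s[1], s[0], t[0]]]
    # Gauss-Jordan elimination with partial pivoting.
    for col in range(3):
        pivot = max(range(col, 3), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(3):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [mr - f * mc for mr, mc in zip(m[r], m[col])]
    return tuple(m[i][3] / m[i][i] for i in range(3))

def normalize(reading, coeffs):
    """Map one sensor's reading onto the group-average line."""
    a, b, c = coeffs
    return a * reading ** 2 + b * reading + c

if __name__ == "__main__":
    sensor = [5.0, 10.0, 15.0, 20.0, 25.0, 30.0, 35.0, 40.0]
    average = [0.001 * v ** 2 + 0.98 * v + 0.2 for v in sensor]  # synthetic data
    coeffs = fit_quadratic(sensor, average)
    print(coeffs, normalize(22.0, coeffs))
```

If NumPy is installed, `numpy.polyfit(x, y, 2)` returns the same three coefficients with far less code. The hand-rolled solver is only there to keep the sketch dependency-free.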

This kind of normalization is as far as most people go. However for the reasons I outlined above, we can’t be sure that we were using a valid sample for that mean data. In my tests it looked like I did not have an equal distribution of sensors above and below the average line, so I still didn’t really have a handle on whether this was improving the absolute accuracy. (I will post some example graphs of this later…)

That brought me to calibrating the DS18b20’s against intrinsic physical standards which rely on the fact that during a phase change (melting, freezing or boiling) adding and removing heat causes no change in temperature. In fact those heating curve plateaus are known so precisely that they use them at NIST to calibrate the expensive thermometers that I am trying to avoid buying. Today they do this with Gallium’s triple point (29.7666 °C) and the triple point of water (0.010 °C), but they used to use Gallium’s melting point plateau (29.7646 °C). Gallium sells for less than a buck a gram on Amazon and a density of about five grams per cubic centimeter means a block big enough to surround one of the DS18’s is almost within a DIY’ers budget. (100 grams will make a disk about two inches across and a quarter inch thick) But considering that commercial Ga melt cells cost about three grand, either that stuff is nasty enough to get me into trouble, or you need a lot more of it, at higher purities than you can buy on eBay, to build one. Then there is the significant time it would take to refreeze the block again for every single sensor. And finally, all exposed metal must be carefully lacquered as Gallium will form an alloy with many metals, and any dissolved metals will compromise the purity of the bath, shifting the melting point. And you would probably have to cover everything with Argon wine preserver.

So I went hunting for other substances I could use for a mid range calibration point and found several good boiling points such as: Ether (35 °C), Pentane (36.1°C), Acetone (56 °C), and Methanol (66 °C). Despite my enthusiasm over coffee the next morning, all of them were summarily rejected by my wife, who strongly suggested that I look for calibration procedures that do not create large amounts of highly explosive vapor. Given how unstoppable she usually is in the pursuit of good data, I was not expecting this outburst of common sense 🙂

So I looked at the other primary standard used to calibrate pt100’s. Turns out it is possible to make your own triple point cell, and if that’s not good enough for you, Mr. Schmermund also produced plans for a freezing point of mercury cell (−38.8 °C) (See: “Calibrating with Cold”, Shawn Carlson, Scientific American, Dec. 2000 issue). However the local 7-11 was fresh out of liquid nitrogen when I checked, and I had this gut feeling that risking mercury induced brain damage was not going to pass the cost/benefit analysis either. If I actually did need sub zero calibration I think I would try using Galinstan (−19 °C), which is now replacing mercury in glass thermometers.

Pre-chilling the sensors in one corner of the bath makes the process much faster. Hold the sensors by the cable, not the metal sheath, or heat from your hands will affect the readings.

It was looking like calibrating against anything other than distilled water was going to take a substantial amount of effort compared to what I was seeing in the NIST and EPA videos. Most sources indicated that the ice point and steam points were at least an order of magnitude more accurate than my ±0.1°C target, making them suitable for the exercise.

While the overall procedure is pretty easy, it did help to practice a few times to get a sense of when I could trust the readings. Checking that you have just the right amount of water in your ice bath makes a big difference, and don’t run the sensors at full tilt or they will self heat. (I left 15 seconds between readings) Since errors on my part would cause the sensor to be warmer than the true ice point, I took the lowest reading, while stirring, as my final reading. The difference between stirring and not stirring was usually 1-2 integer points on the sensor’s raw output (0.0625-0.125°C) and this was consistent for all the sensors.
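As a small worked example of the "integer points" arithmetic: at the DS18B20's 12-bit resolution, one count of raw output is 0.0625°C. The sketch below assumes 12-bit mode and uses hypothetical function names. It converts raw counts to °C and picks the lowest stirred reading as the ice point value.

```python
LSB_CELSIUS = 0.0625  # one raw count at 12-bit resolution

def raw_to_celsius(raw_count):
    """Convert a signed DS18B20 raw count (12-bit mode assumed) to °C."""
    return raw_count * LSB_CELSIUS

def ice_point_reading(raw_counts):
    """Lowest reading logged while stirring. Technique errors can only
    make the bath look warmer than 0°C, so the minimum is the best guess."""
    return raw_to_celsius(min(raw_counts))

if __name__ == "__main__":
    stirred = [4, 3, 2, 3, 2, 2]       # made-up raw counts
    print(ice_point_reading(stirred))  # this sensor's offset at 0°C
```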

If these sensors were linear then reading the ice point was a direct measure of the b in y=mx+b. And this got me wondering if one point calibration was enough all by itself. But once again my wet blanket science adviser assured me that nothing on those graphs told me if the offset was constant over the sensor’s range. Hrmph! (Although according to Thermoworks, ice point alone can be a good way to check for drift, because the most common error in electronic temperature sensors is a shift in the base electrical value)

I found a silicone vegetable steamer lid for the calibration that had three DS18B20 sized holes in it already. Getting the right pace for your slow rolling boil is important, and this lid sheds the condensed water back into the pot reasonably well. Alligator clips also help speed the process.

So I moved on to measuring the steam point. Water’s boiling point is not necessarily at 100°C and the only factor that is really involved in the variance is atmospheric pressure. Altitude is often given as an alternative when pressure information is not directly available and there are plenty of places to look up elevation and barometric pressure data (& converters) for the necessary corrections.
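If you only know your elevation, a rough pressure estimate can come from the standard-atmosphere barometric formula. This is a sketch, not part of my procedure: the function name is hypothetical, and the formula describes an idealized atmosphere, so real weather can easily move the true pressure by tens of mbar. A measured or locally reported pressure is the better input whenever you can get one.

```python
def standard_pressure_mbar(altitude_m):
    """Standard-atmosphere pressure (mbar) at a given altitude in metres.

    Valid for the lower atmosphere only, and ignores day-to-day weather.
    """
    return 1013.25 * (1.0 - 2.25577e-5 * altitude_m) ** 5.25588

if __name__ == "__main__":
    for h in (0, 250, 500, 1000, 1600):
        print(h, round(standard_pressure_mbar(h), 1))
```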

I already had some MS5805-02 sensors on hand, so with the help of Luke Miller’s library, I could read my local atmospheric pressure for the correction directly. The accuracy of my pressure sensor was ±2.5 mbar (similar to the more common BMP180), with the B.Pt. adjustment equation being: Corrected B.Pt. (°C) = 100 + ((PressureReading (mbar) - 1013.25) / 30). So the 5 mbar total error range in the pressure sensor could change the adjusted boiling point by up to 0.167°C (5/30). This means that the error in my pressure sensor measurement is at least as significant as the other aspects of this procedure. Better than the default ±0.5°C, but it puts a limit on how accurate I can get with my steam point measurement.
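The adjustment equation and its error budget are easy to check in code. This sketch just restates the linear approximation quoted above (about 1°C per 30 mbar around standard pressure). The function names and the 998.25 mbar example reading are mine.

```python
STANDARD_PRESSURE_MBAR = 1013.25
MBAR_PER_DEGREE = 30.0  # linear approximation used in the text

def corrected_boiling_point(pressure_mbar):
    """Boiling point of water (°C) at the measured pressure."""
    return 100.0 + (pressure_mbar - STANDARD_PRESSURE_MBAR) / MBAR_PER_DEGREE

def boiling_point_span(pressure_mbar, sensor_accuracy_mbar=2.5):
    """Total range of the corrected boiling point caused by the
    +/- accuracy of the pressure sensor."""
    high = corrected_boiling_point(pressure_mbar + sensor_accuracy_mbar)
    low = corrected_boiling_point(pressure_mbar - sensor_accuracy_mbar)
    return high - low

if __name__ == "__main__":
    reading = 998.25                         # hypothetical pressure reading
    print(corrected_boiling_point(reading))  # 15 mbar low -> 0.5°C below 100
    print(round(boiling_point_span(reading), 3))
```

The span works out to 5/30 ≈ 0.167°C regardless of the reading, which is why the pressure sensor ends up setting the floor on steam point accuracy.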

Cutting down the stacks on the Fred steamer lid allowed me to do multiple sensors at once. This saves time, but be careful or you will pay the piper with a few burned fingers when you change them out.

With each sensor just under the surface of the boiling water (since the evaporation process happens a little bit above 100°C), each one took about 5 minutes to warm up to reading temperature with the water on a slow to medium boil, and it was easy to see that on the serial monitor. I didn’t consider the test done till I saw at least a full minute of stable output (reading the sensor every 10 seconds). Since errors in my technique would produce readings on the cold side, I took the highest ‘frequently repeated’ number as the final reading. Most sensors settled nicely, while some of them toggled back and forth by one integer point from one reading to the next.
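One way to pin down "highest frequently repeated" is sketched below. The threshold of three repeats is an arbitrary assumption of mine (I picked the value by eye on the serial monitor), the function name is hypothetical, and 12-bit raw counts at 0.0625°C each are assumed.

```python
from collections import Counter

def steam_point_reading(raw_counts, min_repeats=3):
    """Highest raw count that shows up at least `min_repeats` times in the
    stable part of the log, converted to °C (0.0625°C per count)."""
    counts = Counter(raw_counts)
    frequent = [value for value, n in counts.items() if n >= min_repeats]
    if not frequent:
        raise ValueError("no reading repeated often enough; keep logging")
    return max(frequent) * 0.0625

if __name__ == "__main__":
    # Made-up log from a sensor toggling by one count near the plateau.
    stable_minute = [1592, 1593, 1592, 1593, 1593, 1594, 1592]
    print(steam_point_reading(stable_minute))
```

Requiring a few repeats keeps a single stray high count from being taken as the plateau.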

In comparison to the steam point procedure, I trust the Ice point as more reproducible because it does not suffer from any pressure information dependency. Perhaps more important is the fact that 100°C is far away from my 20-30°C target range, leaving the possibility for significant errors if the sensors have a non-linearity problem.

With the ice and steam readings in hand, I could construct a two-point calibration for each of my DS18B20’s with slope M=Δy/Δx, and B=(the ice point reading). (explained here, and if that left you in the dust, there are lots of fill-in-the-blank spreadsheet templates on the web)
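Here is a minimal sketch of that two-point calibration. It assumes one particular reading of y=mx+b: x is the true temperature and y is what the sensor reports, so b is the ice point reading and a corrected temperature comes from inverting the line. The function names and the example readings are hypothetical.

```python
def two_point_calibration(ice_reading, steam_reading, true_boiling_point,
                          true_ice_point=0.0):
    """Return (m, b) for sensor_reading = m * true_temp + b."""
    m = (steam_reading - ice_reading) / (true_boiling_point - true_ice_point)
    b = ice_reading - m * true_ice_point  # equals the ice reading at 0°C
    return m, b

def corrected_temperature(reading, m, b):
    """Invert the calibration line to estimate the true temperature."""
    return (reading - b) / m

if __name__ == "__main__":
    # Made-up sensor: reads 0.25 in the ice bath and 99.25 at a
    # pressure-corrected boiling point of 99.5°C.
    m, b = two_point_calibration(0.25, 99.25, 99.5)
    print(m, b, corrected_temperature(25.0, m, b))
```

By construction the corrected values hit both reference points exactly. Everything in between rests on the assumption that the sensor is linear.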

At this point I am still doing tests & chewing on numbers, but the standard deviations around the mean line are being reduced by this ice & steam point calibration. The problem is that even after I apply the resulting slope and intercept I still have significant residuals from a mean that is derived with the corrected numbers. I thought that the two point calibration would make the graphs of the individual sensors line up very closely with one another, and that they would have nearly identical slopes(?) I am left wondering if larger sensor errors up at 100°C mean that I need to apply some additional process to normalize my sensors to each other in the 30°C range after doing the two point calibration. But using the process I described above would generate ‘b’ value corrections, and I am very reluctant to modify my y intercept numbers because I think using the ice point to measure that offset is robust. These doubts about the accuracy of the steam point, the sensors’ linearity, and my lack of a nice “mid-range” standard to calibrate against, have me hunting for a method which would gracefully combine a single (ice) point calibration with normalization. And the dip in the datasheet’s mean error curve between 20-30°C implies that even after applying ice point corrections my average line will still be 0.05°C lower than actual (?)

Another important observation is that the means generated from the uncorrected data were within 0.14-0.16°C of the means calculated after applying the two point calibration. Either my sensors actually did have a reasonably normal distribution of error, or I might have missed something important. The implication in that first case is that normalization alone should improve your overall accuracy, but I still need to get my hands on a calibrated pt100 to know for sure….Argh!

Addendum 2015-05-18

Bill Earl just posted a beautifully written article on sensor calibration, which puts everything here into context. A great job once again by the folks over at Adafruit!

Addendum 2016-02-12

Just adding a quick link to a small post on the pre-filtering I do with these sensors, which I only posted because no one else seems to bother posting data on the ‘typical quality’ you see with the cheap eBay sensors. And after splashing out on a Thermapen reference thermometer ($200), I can try a multi-point calibration for these sensors that is closer to my target temperature range.

Addendum 2016-03-05

Just put the finishing touches on a new calibration approach which, compared to this ice & steam point method, was an order of magnitude faster to do. If you are calibrating a large number of sensors, the reference thermometer is definitely worth the investment.